Digital privacy is the proactive right of individuals to own, control, and secure their personal data across AI, IoT, and online platforms.

What Digital Privacy Means in 2026

Surprisingly, although most Americans (92%) are worried about their online privacy, only 3% understand how privacy regulations work. That gap explains a lot when you look at the data breaches that happen daily and cost millions of dollars.

Data is a valuable commodity today: every app you download, every search you make, and every image you scroll past generates data that some company wants to acquire and use for profit. With the average cost of a data breach in the United States now at $10.22 million, the stakes for getting privacy right are higher than ever.

KEY TAKEAWAYS

- In the US, about 20 states have passed strong privacy laws, and California leads the way with the most fines.

- Small steps, like multi-factor authentication, can prevent greater risks.

- Many people suspect that the AI systems companies use can take or retain their data.

Privacy Rules Are Everywhere Now

Twenty states have passed comprehensive privacy laws at this point. California’s been leading the charge, slapping Tractor Supply with a $1.35 million fine after discovering its opt-out form didn’t actually do anything. Regulators have moved past writing rules and started enforcing them aggressively.

The regulatory maze creates real problems for businesses trying to stay compliant across different jurisdictions. Delaware, Minnesota, and New Jersey dropped their nonprofit exemptions recently. Universal opt-out signals like Global Privacy Control are now required in multiple states. Companies that ignore these signals are finding themselves on the wrong end of enforcement actions.
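
To make the opt-out signal concrete: under the Global Privacy Control specification, a participating browser sends the HTTP request header `Sec-GPC: 1`. The sketch below shows one way a site could detect it. The function names and the policy dictionary are hypothetical, and what a business must actually do on receiving the signal depends on the state law that applies.

```python
from typing import Mapping


def gpc_opt_out_requested(headers: Mapping[str, str]) -> bool:
    """Return True when the request carries a Global Privacy Control signal."""
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    # The GPC specification defines "1" as the value that expresses the preference.
    return normalized.get("sec-gpc") == "1"


def allowed_uses(headers: Mapping[str, str]) -> dict:
    """Hypothetical policy: a GPC signal switches off selling and ad sharing."""
    opted_out = gpc_opt_out_requested(headers)
    return {"sell_data": not opted_out, "share_for_ads": not opted_out}
```

Calling `allowed_uses({"Sec-GPC": "1"})` returns both flags set to `False`, while a request without the header leaves them `True`. An opt-out form that "didn't actually do anything" is the failure this kind of check is meant to avoid: the signal has to change what the site does with the data.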

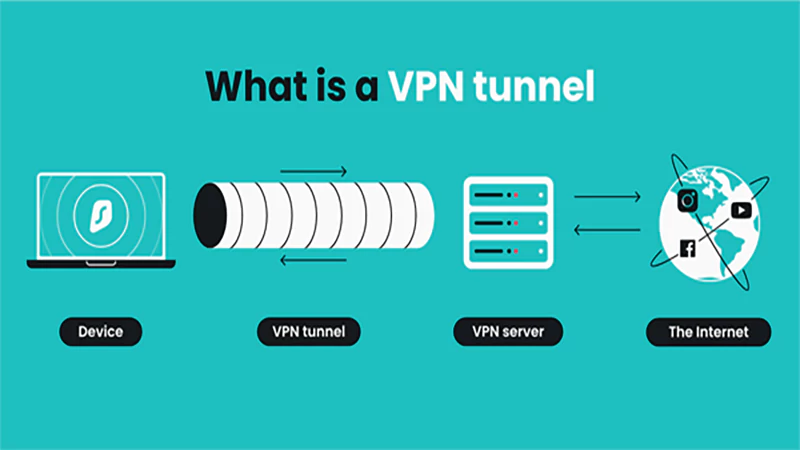

For regular people concerned about their data, a private VPN service offers a straightforward way to encrypt browsing traffic and hide IP addresses on public networks. That matters because coffee shop Wi-Fi and hotel connections remain easy targets for anyone trying to intercept traffic.

Over in Europe, the GDPR just hit its tenth anniversary. Regulators there have handed out more than €5.65 billion in fines since the law took effect. The EU’s AI Act kicks into full enforcement this year too, banning things like mass facial recognition scraping.

Actually Protecting Yourself

Most people never audit their app permissions. Your phone probably has apps accessing your location, contacts, and microphone right now for no good reason. Taking ten minutes to review those settings can eliminate a surprising amount of data leakage.

Password habits remain terrible across the board. The International Association of Privacy Professionals found that 68% of consumers worry about their online privacy. Yet tons of people still use the same password everywhere. That’s basically handing over the keys to every account you own.

Two-factor authentication (2FA) helps, but it is worth choosing an authenticator app over text message codes. Text message codes are far easier to intercept than most people realize. And yeah, keeping your software updated sounds boring, but those patches close security holes that attackers actively hunt for.
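
For the curious, here is roughly what an authenticator app does. Most of them implement the time-based one-time password (TOTP) algorithm from RFC 6238: the code is computed on your device from a shared secret and the current time, so nothing has to travel over the phone network. This is a minimal sketch using only Python's standard library. The function name `totp` and its defaults are choices made for this example, and real apps usually receive the secret as a base32 string from a QR code rather than as raw bytes.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at_time=None, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if at_time is None:
        at_time = time.time()
    # Count how many 30-second windows have passed since the Unix epoch.
    counter = int(at_time) // step
    # HMAC the counter (as an 8-byte big-endian integer) with the shared secret.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte choose a 4-byte slice.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    # Keep the last `digits` decimal digits, left-padded with zeros.
    return str(code % 10 ** digits).zfill(digits)
```

With the RFC's published test secret, `totp(b"12345678901234567890", at_time=59)` yields `287082`. Because the code changes every 30 seconds and is derived locally, an attacker who can reroute your text messages gains nothing.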

The AI Problem Nobody Wants to Talk About

AI runs on data. Massive amounts of it. And a lot of that data comes from personal information: medical records, financial histories, behavioral patterns.

The IAPP has started focusing heavily on AI governance alongside traditional privacy work. Under the EU AI Act, organizations using AI need to document their risk assessments, keep activity logs, and maintain human oversight. Getting this wrong triggers fines up to 7% of global revenue.

Trust in AI sits pretty low right now. About 70% of people don’t believe companies will use artificial intelligence responsibly. That skepticism seems justified given how opaque most AI systems remain about their data handling.

What Consumers Actually Want

Data subject requests shot up 246% between 2021 and 2023. People want to see what information companies have on them, and they’re exercising their legal rights to access, change, or delete that data. This trend isn’t slowing down.

Companies that brush off privacy concerns pay for it beyond just regulatory fines. Research shows 87% of consumers would walk away from a business that mishandled their data. Privacy isn’t just about compliance anymore; it’s become a genuine competitive advantage.

Kaspersky’s security team makes a good point: protecting privacy takes both technical tools and smarter habits. Encrypted connections help. So does being stingier about what you share and which apps get access to your stuff.

Where This Is All Headed

Companies that want real trust from consumers treat privacy as a true priority, not just a box to check. The ones that explain clearly what they do with your data, give you opt-out buttons that work, and respect your choices are the ones that earn it.

Consumers play an integral role too: learn where your data goes, stop handing over unnecessary information, and use the privacy tools available to you. Laws alone don’t give you privacy; your daily choices determine how much of it you keep.

What is digital privacy in 2026?

It is the proactive right of individuals to own, control, and secure their personal data across AI, IoT, and online platforms.

Why is it crucial?

It prevents misuse of data for AI profiling, identity theft, or financial fraud in a hyper-connected world.

What is the core principle at present?

Data belongs to the user, not the organization; companies are merely custodians with strict, accountable obligations.